Newton wrote, “My brain never hurt more than in my studies of the moon [and Earth and Sun]”. Unsurprising sentiment, as the seemingly simple three-body problem is intrinsically intractable and practically unpredictable. … If chaos is a nonlinear “super power”, enabling deterministic dynamics to be practically unpredictable, then the Hamiltonian is a neural network “secret sauce”, a special ingredient that enables learning and forecasting order and chaos.

So begins an article I co-authored with NAIL, the Nonlinear Artificial Intelligence Lab at NCSU, which appears today in Physical Review E.

Inspired by how brains work, artificial neural networks are powerful computational tools. Natural neurons exchange electrical impulses according to the strengths of their connections. Artificial neural networks mimic this behavior with numerical weights and biases, which they adjust during training to minimize the difference between their actual and desired outputs.
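Here is a minimal sketch of that training loop in the simplest case I could pick: a single linear neuron with no activation function. Gradient descent nudges its weight and bias to shrink the mean squared difference between its actual and desired outputs. The name `train_neuron`, the learning rate, and the toy target y = 2x + 1 are illustrative assumptions, not anything from our paper.

```python
def train_neuron(samples, lr=0.05, epochs=2000):
    """Fit a single linear neuron y_hat = w*x + b by gradient descent on mean squared error.

    Hypothetical helper for illustration; real networks stack many neurons with nonlinearities.
    """
    w, b = 0.0, 0.0  # untrained weight and bias
    n = len(samples)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in samples:
            err = (w * x + b) - y  # actual output minus desired output
            grad_w += 2 * err * x / n  # d(mean squared error)/dw
            grad_b += 2 * err / n  # d(mean squared error)/db
        # Step downhill: adjust weight and bias against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy training set: desired output is 2x + 1.
data = [(x, 2 * x + 1) for x in [-1.0, -0.5, 0.0, 0.5, 1.0]]
w, b = train_neuron(data)
print(f"w = {w:.3f}, b = {b:.3f}")  # prints: w = 2.000, b = 1.000
```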

From cancer diagnoses to self-driving cars to game playing, neural networks are revolutionizing our world. But although they are universal approximators, their approximations may require exponentially many neurons. In particular, they can be confounded by the mix of order and chaos in natural and artificial phenomena.

NAIL’s solution to this “chaos blindness” exploits an elegant and deep structure underlying everyday motion. William Rowan Hamilton discovered it when he remarkably re-imagined Isaac Newton’s laws of motion as an incompressible, energy-conserving flow in an abstract, higher-dimensional space of positions and momenta.
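In Hamilton's picture, a single energy function H(q, p) generates the motion: dq/dt = ∂H/∂p and dp/dt = -∂H/∂q. The sketch below steps those equations and checks two properties of the flow. First, the step map preserves phase-space area, the discrete analog of incompressibility. Second, the energy stays close to its starting value over many cycles. The example system is a unit-mass, unit-frequency harmonic oscillator, and the integrator is symplectic Euler. Both choices, and the function names, are my own illustrative assumptions rather than anything taken from the paper.

```python
def hamiltonian(q, p):
    """Energy of a unit-mass, unit-frequency harmonic oscillator (assumed example system)."""
    return 0.5 * (p * p + q * q)


def step(q, p, dt):
    """One symplectic-Euler step of Hamilton's equations dq/dt = dH/dp, dp/dt = -dH/dq."""
    p = p - dt * q  # dp/dt = -dH/dq = -q
    q = q + dt * p  # dq/dt = dH/dp = p, using the updated momentum
    return q, p


def jacobian_det(q, p, dt, h=1e-6):
    """Finite-difference determinant of the step map; 1 means phase-space area is preserved."""
    q0, p0 = step(q, p, dt)
    q_dq, p_dq = step(q + h, p, dt)  # perturb position
    q_dp, p_dp = step(q, p + h, dt)  # perturb momentum
    a, c = (q_dq - q0) / h, (p_dq - p0) / h
    b, d = (q_dp - q0) / h, (p_dp - p0) / h
    return a * d - b * c


q, p, dt = 1.0, 0.0, 0.01
e0 = hamiltonian(q, p)
max_drift = 0.0
for _ in range(10_000):  # about 16 oscillation periods
    q, p = step(q, p, dt)
    max_drift = max(max_drift, abs(hamiltonian(q, p) - e0))

det = jacobian_det(0.3, -0.7, dt)
print(f"max energy drift: {max_drift:.2e}, area factor: {det:.6f}")
```

A symplectic integrator is used because it respects the area-preserving structure exactly, so the energy error stays bounded instead of accumulating. A plain forward-Euler step would instead make the orbit spiral outward.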

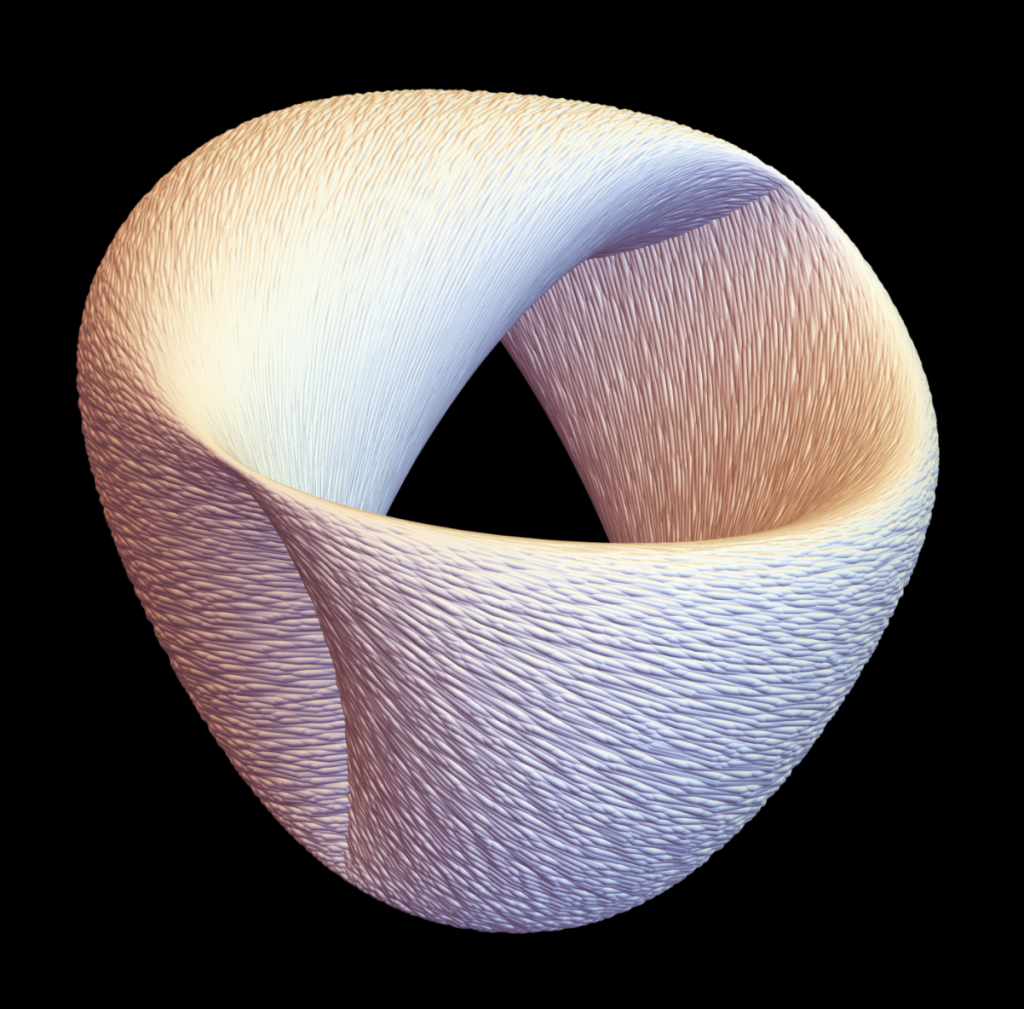

In this phase space, each motion is a unique trajectory confined to a constant-energy surface, and regular motion is further confined to a donut-like hypertorus. Building this structure into our Hamiltonian neural networks constrains them in a way that lets them properly forecast systems that mix order and chaos.
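The core idea can be sketched in a few lines. A Hamiltonian network does not learn velocities directly. Instead, it learns a scalar energy H and derives the motion from Hamilton's equations, so the learned flow stays on the learned energy surfaces by construction. In this hedged sketch, a hypothetical three-parameter quadratic H = a q² + b p² + c q p stands in for the neural network. A real one would use a multilayer network and automatic differentiation. The training data are exact derivatives of a harmonic oscillator, and the names `learned_H`, `vector_field`, `train`, and `energy_rate` are illustrative.

```python
def learned_H(theta, q, p):
    """Hypothetical quadratic stand-in for a neural network: H = a*q^2 + b*p^2 + c*q*p."""
    a, b, c = theta
    return a * q * q + b * p * p + c * q * p


def vector_field(theta, q, p):
    """Hamilton's equations applied to the learned H: (dq/dt, dp/dt) = (dH/dp, -dH/dq)."""
    a, b, c = theta
    dH_dq = 2 * a * q + c * p
    dH_dp = 2 * b * p + c * q
    return dH_dp, -dH_dq


def energy_rate(theta, q, p):
    """dH/dt along the learned flow; it cancels to zero for any theta."""
    a, b, c = theta
    q_dot, p_dot = vector_field(theta, q, p)
    return (2 * a * q + c * p) * q_dot + (2 * b * p + c * q) * p_dot


def train(samples, lr=0.1, epochs=500):
    """Gradient descent on the mismatch between predicted and observed time derivatives."""
    theta = [0.0, 0.0, 0.0]
    n = len(samples)
    for _ in range(epochs):
        g = [0.0, 0.0, 0.0]
        for q, p, q_dot, p_dot in samples:
            pred_q_dot, pred_p_dot = vector_field(theta, q, p)
            r1, r2 = pred_q_dot - q_dot, pred_p_dot - p_dot
            # Partial derivatives of r1**2 + r2**2 with respect to (a, b, c).
            g[0] += -4 * q * r2 / n
            g[1] += 4 * p * r1 / n
            g[2] += (2 * q * r1 - 2 * p * r2) / n
        theta = [t - lr * gi for t, gi in zip(theta, g)]
    return theta


# Observed (q, p, dq/dt, dp/dt) from a true oscillator H = (q^2 + p^2) / 2.
grid = [-1.0, 0.0, 1.0]
samples = [(q, p, p, -q) for q in grid for p in grid]
theta = train(samples)
print("learned (a, b, c):", [round(t, 3) for t in theta])
print("learned energy at (1, 0):", learned_H(theta, 1.0, 0.0))
```

Because both derivatives come from one scalar function, dH/dt = ∂H/∂q·dq/dt + ∂H/∂p·dp/dt cancels for every parameter setting, not just the trained one. That built-in conservation is the kind of structural constraint a Hamiltonian network carries into its forecasts.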
